license: agpl-3.0

library_name: ultralytics

pipeline_tag: object-detection

tags:

- yolo

- yolov11

- ultralytics

- object-detection

- poultry

- chicken

- egg

- broiler

- agriculture

- smart-farming

- animal-welfare

- precision-livestock-farming

datasets:

- Williamsanderson/PoultryVision-Dataset

metrics:

- mAP

- precision

- recall

base_model: Ultralytics/YOLOv11

model-index:

- name: PoultryVision-YOLOv11m

results:

- task:

type: object-detection

name: Poultry & Egg Detection

dataset:

type: Williamsanderson/PoultryVision-Dataset

name: PoultryVision Unified Dataset

metrics:

- type: mAP@50-95

value: 0.793

name: mAP@50-95 (all classes)

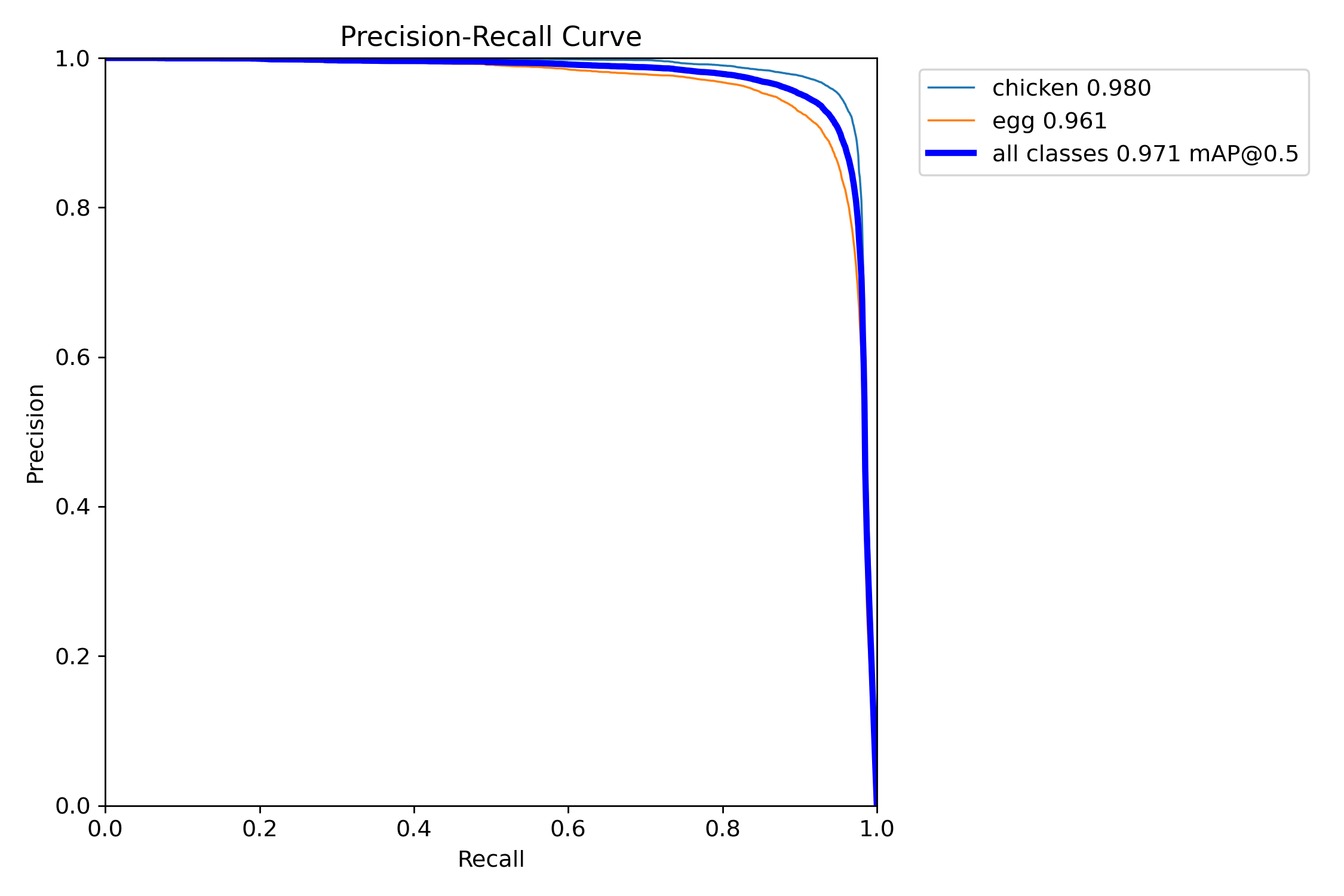

- type: mAP@50

value: 0.971

name: mAP@50 (all classes)

- type: precision

value: 0.934

name: Precision

- type: recall

value: 0.934

name: Recall

PoultryVision — YOLOv11m fine-tuned for Broiler & Egg Detection

PoultryVision is a fine-tuned YOLOv11m model for real-time detection of chickens (broilers, hens, cocks) and eggs in poultry-farm environments. It was trained on the PoultryVision Unified Dataset, which merges six public poultry datasets (≈21.6 k detection images + MVBroTrack multi-camera data).

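This model scores 8.5 points higher in mAP@50-95 than the fine-tuned YOLOv11x reported in the MVBroTrack paper (Cardoen et al., 2025), 79.3 vs. 70.8, while using ~2.8× fewer parameters (20.1 M vs. 56.9 M) and ~2.7× less disk (40 MB vs. 109 MB). The two scores come from different evaluation sets; see the direct comparison note below.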

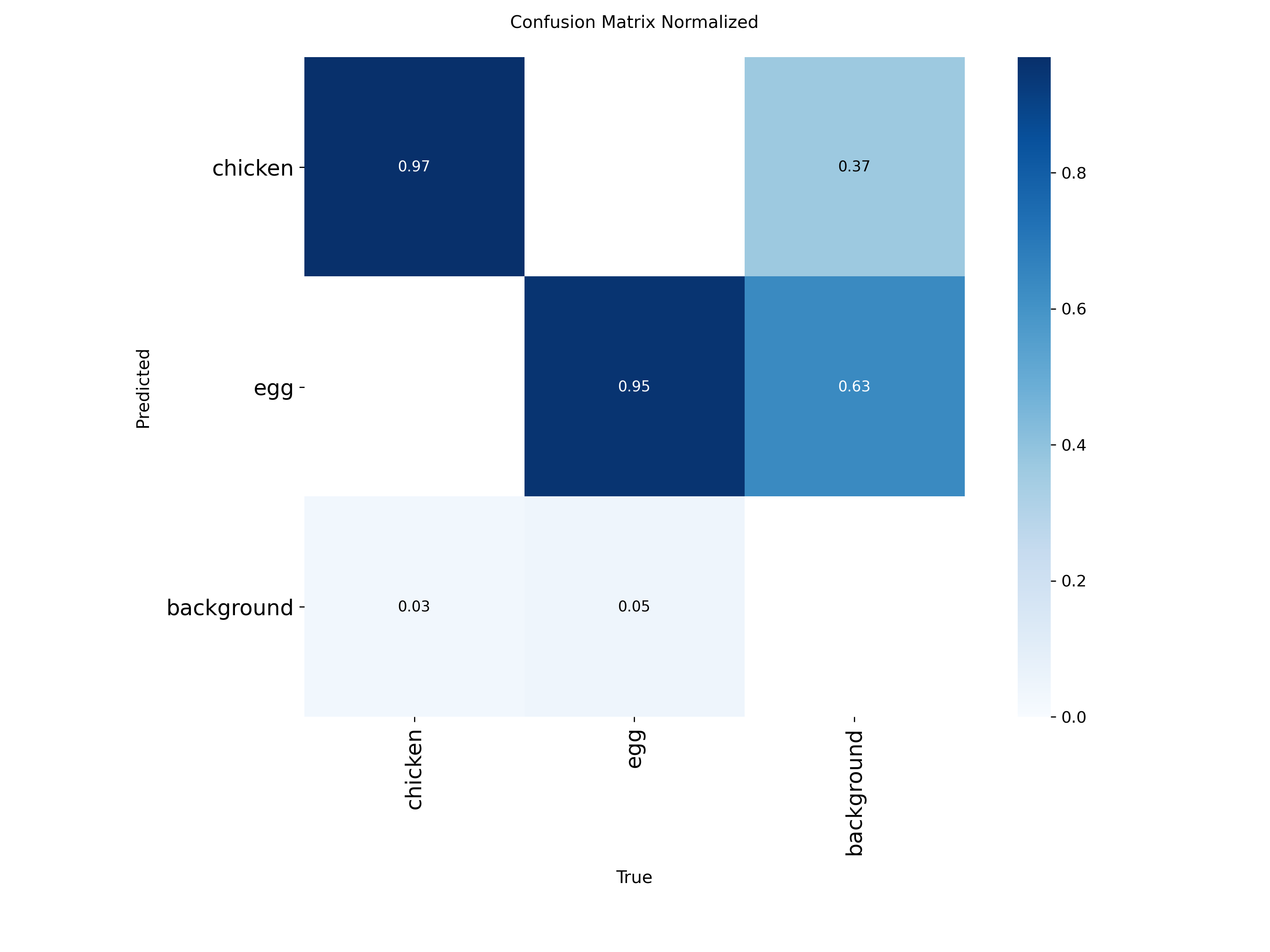

Performance

Final metrics (validation set — 3 706 images, imgsz 640)

| Metric / setting | Value |
|---|---|
| mAP@50-95 | 0.7934 |
| mAP@50 | 0.9711 |
| Precision | 0.9339 |
| Recall | 0.9345 |
| Train set | 15 987 images |
| Val set | 3 706 images |
| Test set | 1 893 images |
| Classes | 2 (chicken, egg) |
| Epochs | 70 |
| Optimizer | AdamW (lr0 = 1e-3, lrf = 1e-2) |
| Image size | 640 |
| Batch size | 4–16 (mixed, AMP) |
| Hardware | Local NVIDIA GPU |
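As a quick sanity check on the table above, the following sketch recomputes the split proportions and the F1 score implied by the reported precision and recall. The variable names are illustrative, the numbers are copied from the table, and the F1 value is derived here rather than reported by the author.

```python
# Numbers copied from the table above; variable names are illustrative.
splits = {"train": 15_987, "val": 3_706, "test": 1_893}
precision, recall = 0.9339, 0.9345

total = sum(splits.values())
shares = {name: count / total for name, count in splits.items()}

# F1 is the harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)

for name, share in shares.items():
    print(f"{name}: {share:.1%} of {total} images")
print(f"F1 implied by the reported precision/recall: {f1:.4f}")
```

The three splits add up to the 21 586 images quoted for the unified dataset.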

Comparison with the reference paper

Reference paper — Cardoen et al., "Multi-camera detection and tracking for individual broiler monitoring", Computers and Electronics in Agriculture, 2025 (MVBroTrack).

Paper benchmark table (AP@50-95 in %, single-view YOLO on the MVBroTrack test set; the last row is this model, measured on the PoultryVision Unified validation split):

| Model | Starter | Grower | Finisher | Overall | Params | Weights |
|---|---|---|---|---|---|---|
| YOLOv11x, zero-shot (COCO) | 1.58 | 11.16 | 21.80 | 13.94 | 56.9 M | 109 MB |
| YOLOv11x, fine-tuned (paper) | 63.3 | 70.0 | 74.9 | 70.8 | 56.9 M | 109 MB |
| YOLOv11m, fine-tuned (this model) | n/a | n/a | n/a | 79.3 🏆 | 20.1 M | 40 MB |

Δ vs. paper (fine-tuned YOLOv11x): +8.5 mAP@50-95 with weights about 2.7× smaller on disk and about 2.8× fewer parameters.
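The headline numbers follow directly from the table. A minimal sketch of the arithmetic, with values copied from the table and illustrative variable names:

```python
# Values copied from the comparison table; names are illustrative.
paper_map, this_map = 70.8, 79.3            # overall AP@50-95, in %
paper_params_m, this_params_m = 56.9, 20.1  # parameters, in millions
paper_size_mb, this_size_mb = 109, 40       # weight file size, in MB

delta_map = this_map - paper_map
param_ratio = paper_params_m / this_params_m
size_ratio = paper_size_mb / this_size_mb

print(f"mAP@50-95 gain: {delta_map:+.1f} points")
print(f"parameter ratio: {param_ratio:.1f}x")
print(f"disk size ratio: {size_ratio:.1f}x")
```

Note that the gain compares scores from two different evaluation sets, so it is an indicative figure rather than a controlled benchmark result.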

Why this model scores higher on the unified benchmark:

- Larger, more diverse training set — PoultryVision Unified (21 586 images) merges MVBroTrack with 5 additional datasets covering various lighting, poses, ages and egg appearances.

- Stronger augmentation recipe — HSV jitter, mosaic (1.0), mixup (0.1), translate, scale, rotation, random erasing, RandAugment, close-mosaic.

- AdamW + warmup + cosine-like LR decay (paper uses SGD).

- Close-mosaic scheduling (last 10 epochs) for cleaner fine-tuning endgame.

Direct comparison note: our 79.3 % is measured on the PoultryVision Unified validation split, which is broader than the paper’s MVBroTrack-only test set, so the two figures are not a like-for-like comparison. The 70.8 % figure remains a useful reference point because the paper's authors report it as the best single-view detector on broilers; our model covers both broilers and eggs and scores higher on its own, broader split.

Quick start

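Install the dependencies:

    pip install ultralytics huggingface_hub

Download the weights and run inference on a single frame:

    from huggingface_hub import hf_hub_download
    from ultralytics import YOLO

    # Fetch the trained weights from the Hub (cached locally after the first call)
    ckpt = hf_hub_download(
        repo_id="Williamsanderson/PoultryVision",
        filename="best.pt",
    )

    model = YOLO(ckpt)
    results = model("path/to/farm_frame.jpg", conf=0.25, iou=0.6)

    for r in results:
        r.save("annotated.jpg")  # image with boxes drawn
        print(r.boxes.data)      # [x1,y1,x2,y2,conf,cls]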
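Each row of `r.boxes.data` has the layout `[x1, y1, x2, y2, conf, cls]`, so per-class counts can be derived with a few lines of NumPy. The sketch below is illustrative: `count_by_class` and the demo rows are hypothetical, and in practice the input would be something like `r.boxes.data.cpu().numpy()`.

```python
import numpy as np

# Class IDs as listed in the Classes table of this card.
CLASS_NAMES = {0: "chicken", 1: "egg"}


def count_by_class(rows, min_conf=0.25):
    """Count detections per class from rows of [x1, y1, x2, y2, conf, cls]."""
    rows = np.asarray(rows, dtype=float).reshape(-1, 6)
    kept = rows[rows[:, 4] >= min_conf]  # drop low-confidence boxes
    counts = {name: 0 for name in CLASS_NAMES.values()}
    for cls_id in kept[:, 5].astype(int):
        counts[CLASS_NAMES[cls_id]] += 1
    return counts


# Made-up rows standing in for real model output.
demo_rows = [
    [10, 20, 110, 220, 0.91, 0],
    [300, 40, 380, 150, 0.62, 0],
    [50, 300, 80, 340, 0.88, 1],
    [200, 200, 230, 240, 0.10, 1],  # below min_conf, ignored
]
print(count_by_class(demo_rows))
```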

Validate on the unified dataset

    from ultralytics import YOLO

    model = YOLO("best.pt")
    metrics = model.val(data="data.yaml", split="test", imgsz=640, conf=0.001, iou=0.6)
    print(metrics.box.map50, metrics.box.map)  # mAP@50, mAP@50-95

Export for edge deployment

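    from ultralytics import YOLO

    model = YOLO("best.pt")
    model.export(format="onnx", imgsz=640, dynamic=True)  # ONNX with dynamic input shapes
    model.export(format="engine", imgsz=640, half=True)   # TensorRT FP16 (requires an NVIDIA GPU)
    model.export(format="tflite", int8=True)              # TFLite INT8 for edge devices (Coral uses the separate "edgetpu" format)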

🐓 Classes

| ID | Name | Description |
|---|---|---|
| 0 | chicken | All poultry: broilers, hens, cocks |
| 1 | egg | Chicken eggs (ground or in nest) |

Full pipeline (beyond single-view detection)

This model is the single-view detection stage of a larger pipeline inspired by the MVBroTrack paper:

1. Single-view detection (this model, YOLOv11m)
2. Ground-plane projection using multi-camera calibration
3. Point / tracklet fusion via graph construction (Algorithm 1 & 2 of the paper)
4. Tracking-by-Curve-Matching (TBCM) across 4 synchronized cameras
5. Behavior analysis (feeding / drinking / resting / active) and daily farm reports

The full reference implementation of modules 2-5 is shipped as poultry_vision_pipeline.py in this repo.
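To give a feel for the ground-plane projection stage, here is a minimal sketch that maps a detection to floor coordinates with a planar homography. It assumes a flat floor and a known 3×3 image-to-ground matrix per camera; the helper names, the choice of the bottom-centre of the box as reference point, and the demo matrix are all hypothetical, and this is not necessarily how the paper or `poultry_vision_pipeline.py` does it.

```python
import numpy as np


def foot_point(box):
    """Bottom-centre of an (x1, y1, x2, y2) box, used here as the ground contact point."""
    x1, y1, x2, y2 = box
    return np.array([(x1 + x2) / 2.0, y2])


def project_to_ground(points_px, H):
    """Apply a 3x3 homography H to N pixel points and return N ground-plane points."""
    pts = np.asarray(points_px, dtype=float).reshape(-1, 2)
    homog = np.hstack([pts, np.ones((len(pts), 1))])  # to homogeneous coordinates
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]             # back to Cartesian coordinates


# Hypothetical calibration: 1 pixel = 1 cm, with the origin shifted by (-1 m, -2 m).
H_demo = np.array([
    [0.01, 0.0, -1.0],
    [0.0, 0.01, -2.0],
    [0.0, 0.0, 1.0],
])

box = (100, 50, 200, 300)  # made-up detection in pixels
ground_xy = project_to_ground([foot_point(box)], H_demo)
print(ground_xy)  # position in metres on the floor plane
```

With real calibration data, one homography per camera would bring detections from all views into a shared floor coordinate frame, which is the input the later fusion stages need.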

Files in this repo

| File | Description |
|---|---|
| best.pt | Trained YOLOv11m weights (40 MB) |
| data.yaml | Dataset config (2 classes) |
| args.yaml | Exact training hyperparameters |
| results.csv | Per-epoch training metrics |
| results.png | Training curves (loss + metrics) |
| BoxPR_curve.png | Precision-Recall curve |
| BoxF1_curve.png | F1 curve |
| BoxP_curve.png / BoxR_curve.png | Precision / Recall curves |
| confusion_matrix.png | Confusion matrix (raw) |
| confusion_matrix_normalized.png | Confusion matrix (normalized) |
| labels.jpg | Label distribution visualization |
| val_batch*_pred.jpg | Qualitative predictions on val |
| poultry_vision_pipeline.py | Full multi-camera tracking pipeline code |

Training recipe (excerpt)

    model: yolo11m.pt
    imgsz: 640
    epochs: 70
    optimizer: AdamW
    lr0: 0.001
    lrf: 0.01
    momentum: 0.937
    weight_decay: 0.0005
    warmup_epochs: 3
    box: 7.5
    cls: 0.5
    dfl: 1.5
    hsv_h: 0.015
    hsv_s: 0.7
    hsv_v: 0.4
    degrees: 10
    translate: 0.1
    scale: 0.5
    fliplr: 0.5
    mosaic: 1.0
    mixup: 0.1
    close_mosaic: 10
    auto_augment: randaugment
    erasing: 0.4
    patience: 85
    amp: true

See args.yaml for the complete set.
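A few consequences of these settings are easy to work out by hand. The sketch below derives them from the excerpt, assuming the usual Ultralytics meaning of each key (`lrf` as a fraction of `lr0`, `close_mosaic` as the number of final epochs without mosaic, `patience` as the early-stopping window); the variable names are illustrative.

```python
# Values copied from the recipe excerpt; the interpretation of each key is an
# assumption based on the Ultralytics training settings.
recipe = {"epochs": 70, "lr0": 1e-3, "lrf": 1e-2, "close_mosaic": 10, "patience": 85}

# The final learning rate is lr0 scaled by the lrf fraction.
final_lr = recipe["lr0"] * recipe["lrf"]

# Mosaic stays on until this epoch, then is switched off for the remaining ones.
last_mosaic_epoch = recipe["epochs"] - recipe["close_mosaic"]

# Early stopping can only fire if the patience window fits inside the run.
early_stop_possible = recipe["patience"] < recipe["epochs"]

print(f"final learning rate: {final_lr:.0e}")
print(f"mosaic active through epoch {last_mosaic_epoch} of {recipe['epochs']}")
print(f"early stopping can trigger: {early_stop_possible}")
```

Under these assumptions the run always completes all 70 epochs, because the patience window is longer than the schedule.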

License

- Model weights: AGPL-3.0 (inherited from Ultralytics YOLOv11). Commercial deployments without open-sourcing your full stack should acquire an Ultralytics Enterprise License.

- Code in this repo (poultry_vision_pipeline.py and snippets): AGPL-3.0.

Citation

If you use this model or the PoultryVision dataset, please cite:

    @misc{williamsanderson_poultryvision_2025,
      title        = {PoultryVision: A YOLOv11m Model and Unified Dataset for Broiler and Egg Detection},
      author       = {Stephane Williams Anderson ASSA},
      year         = {2025},
      howpublished = {\url{https://huggingface.co/Williamsanderson/PoultryVision}},
    }

And the reference paper this work is based on:

    @article{cardoen2025mvbrotrack,
      title   = {Multi-camera detection and tracking for individual broiler monitoring},
      author  = {Cardoen, J. and others},
      journal = {Computers and Electronics in Agriculture},
      year    = {2025}
    }

Acknowledgements

- Ultralytics for the YOLOv11 architecture and training framework.

- Cardoen et al. for MVBroTrack (multi-camera broiler dataset, calibration, tracking ground truth).

- Roboflow and images.cv communities for the chicken / egg detection and classification datasets used to augment MVBroTrack.