Instructions for using quyanh/OpenRS-GRPO with libraries, notebooks, and local apps.

- Libraries

- Transformers

How to use quyanh/OpenRS-GRPO with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="quyanh/OpenRS-GRPO")
messages = [
    {"role": "user", "content": "Who are you?"},
]
pipe(messages)
```

```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("quyanh/OpenRS-GRPO")
model = AutoModelForCausalLM.from_pretrained("quyanh/OpenRS-GRPO")
messages = [
    {"role": "user", "content": "Who are you?"},
]
inputs = tokenizer.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(**inputs, max_new_tokens=40)
# Decode only the newly generated tokens, skipping the prompt.
print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))
```

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use quyanh/OpenRS-GRPO with vLLM:

Install from pip and serve model

```shell
# Install vLLM from pip:
pip install vllm

# Start the vLLM server:
vllm serve "quyanh/OpenRS-GRPO"

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "quyanh/OpenRS-GRPO",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

Use Docker

```shell
docker model run hf.co/quyanh/OpenRS-GRPO
```

- SGLang

How to use quyanh/OpenRS-GRPO with SGLang:

Install from pip and serve model

```shell
# Install SGLang from pip:
pip install sglang

# Start the SGLang server:
python3 -m sglang.launch_server \
    --model-path "quyanh/OpenRS-GRPO" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "quyanh/OpenRS-GRPO",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

Use Docker images

```shell
docker run --gpus all \
    --shm-size 32g \
    -p 30000:30000 \
    -v ~/.cache/huggingface:/root/.cache/huggingface \
    --env "HF_TOKEN=<secret>" \
    --ipc=host \
    lmsysorg/sglang:latest \
    python3 -m sglang.launch_server \
        --model-path "quyanh/OpenRS-GRPO" \
        --host 0.0.0.0 \
        --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "quyanh/OpenRS-GRPO",
        "messages": [
            {
                "role": "user",
                "content": "What is the capital of France?"
            }
        ]
    }'
```

- Docker Model Runner

How to use quyanh/OpenRS-GRPO with Docker Model Runner:

```shell
docker model run hf.co/quyanh/OpenRS-GRPO
```
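The curl calls shown for vLLM and SGLang can also be made from Python. The following is a minimal sketch using only the standard library; the helper names `build_chat_request` and `chat` are hypothetical, and the default `base_url` assumes the vLLM server on port 8000 (pass `http://localhost:30000` for the SGLang examples).

```python
import json
import urllib.request


def build_chat_request(prompt, base_url="http://localhost:8000",
                       model="quyanh/OpenRS-GRPO"):
    """Build a POST request for the OpenAI-compatible chat completions endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, **kwargs):
    """Send the request and return the assistant's reply text.

    Requires one of the servers above to be running.
    """
    request = build_chat_request(prompt, **kwargs)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # OpenAI-compatible responses put the reply in choices[0].message.content.
    return body["choices"][0]["message"]["content"]


# Example (uncomment once a server is up):
# print(chat("What is the capital of France?"))
```

Keeping request construction separate from the network call makes the payload easy to inspect without a running server.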

Model Summary

This repository hosts a model for the Open RS project, accompanying the paper Reinforcement Learning for Reasoning in Small LLMs: What Works and What Doesn’t. The project explores enhancing reasoning capabilities in small large language models (LLMs) using reinforcement learning (RL) under resource-constrained conditions.

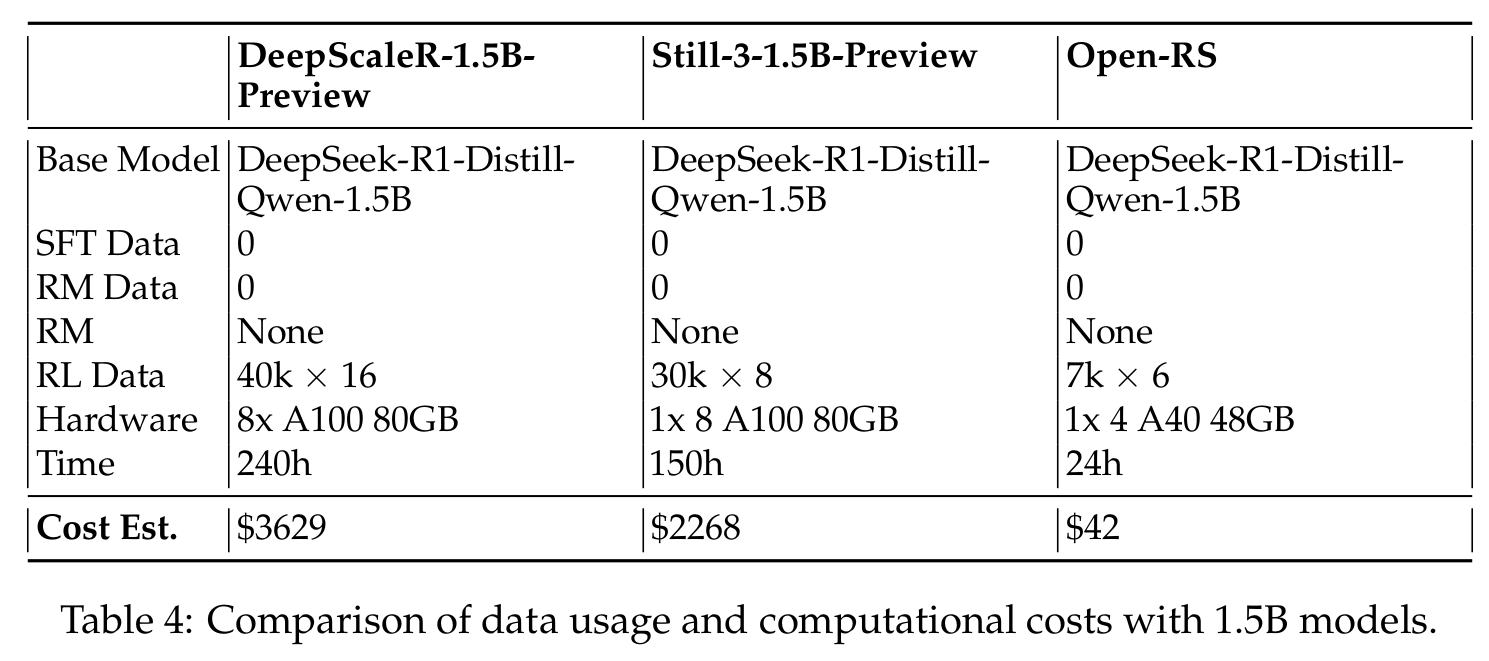

We focus on a 1.5-billion-parameter model, DeepSeek-R1-Distill-Qwen-1.5B, trained on 4 NVIDIA A40 GPUs (48 GB VRAM each) within 24 hours. By adapting the Group Relative Policy Optimization (GRPO) algorithm and leveraging a curated, compact mathematical reasoning dataset, we conducted three experiments to assess performance and behavior. Key findings include:

- Significant reasoning improvements, e.g., AMC23 accuracy rising from 63% to 80% and AIME24 reaching 46.7%, outperforming o1-preview.

- Efficient training with just 7,000 samples at a cost of $42, compared to thousands of dollars for baseline models.

- Challenges like optimization instability and length constraints with extended training.

These results showcase RL-based fine-tuning as a cost-effective approach for small LLMs, making reasoning capabilities accessible in resource-limited settings. We open-source our code, models, and datasets to support further research.
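For readers unfamiliar with GRPO, its central idea is to score each sampled completion relative to the other completions drawn for the same prompt, which removes the need for a separate value model. The sketch below illustrates only that group-relative normalization step in its commonly described form; it is not the authors' adapted training code, and the function name, the `eps` term, the choice of population standard deviation, and the reward values are all illustrative assumptions.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the mean and std of its own group.

    `rewards` holds one scalar reward per completion sampled for a single
    prompt. `eps` (an assumption) avoids division by zero when every
    completion in the group receives the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for 6 completions of one prompt (1.0 = correct answer).
rewards = [1.0, 0.0, 0.0, 1.0, 0.0, 0.0]
advantages = group_relative_advantages(rewards)

# Correct completions get a positive advantage, incorrect ones a negative one.
for reward, advantage in zip(rewards, advantages):
    print(f"reward={reward:.1f} advantage={advantage:+.3f}")
```

In a full GRPO update these advantages weight the policy-gradient term for every token of the corresponding completion; the clipping and KL terms are omitted here.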

For more details, please refer to our GitHub repository.

Evaluation

Performance Highlights

- Open-RS1: 53.0% avg. score

- Open-RS2: 55.7% avg. score, 80.0% on AMC23

- Open-RS3: 56.3% avg. score, 46.7% on AIME24 (outperforms o1-preview at 44.6%)

- Competitive MATH-500 scores; Minerva lags behind 7B models.

Cost Efficiency

Our approach uses 7,000 samples (42,000 total outputs) and costs ~$42 on 4x A40 GPUs in 24 hours, compared to:

- 7B models: Qwen2.5-7B-SimpleRL ($1,633), Eurus-2-7B-PRIME ($1,088)

- 1.5B models: DeepScaleR-1.5B-Preview ($3,629), Still-3-1.5B-Preview ($2,268)
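The figures above can be related with some simple arithmetic. The sketch below uses only the numbers quoted in this section; the per-GPU-hour rate is a derived value, not one stated in the source, and it assumes the full 24 hours on all 4 GPUs.

```python
# Figures quoted in this section.
SAMPLES = 7_000
TOTAL_OUTPUTS = 42_000
OUR_COST_USD = 42
GPUS = 4
HOURS = 24  # assumption: the full 24-hour budget was used

BASELINE_COST_USD = {
    "Qwen2.5-7B-SimpleRL": 1_633,
    "Eurus-2-7B-PRIME": 1_088,
    "DeepScaleR-1.5B-Preview": 3_629,
    "Still-3-1.5B-Preview": 2_268,
}

# Outputs generated per training sample.
outputs_per_sample = TOTAL_OUTPUTS // SAMPLES

# Derived compute budget and the hourly rate it implies.
gpu_hours = GPUS * HOURS
implied_rate = OUR_COST_USD / gpu_hours

# How many times more each baseline cost than this approach.
cost_ratios = {name: cost / OUR_COST_USD for name, cost in BASELINE_COST_USD.items()}

print(f"outputs per sample: {outputs_per_sample}")
print(f"GPU-hours: {gpu_hours}, implied rate: ${implied_rate:.4f}/GPU-hour")
for name, ratio in cost_ratios.items():
    print(f"{name}: {ratio:.1f}x the cost")
```

Under these assumptions the run works out to 6 outputs per sample and 96 GPU-hours, and the listed baselines cost roughly 26 to 86 times as much.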

Citation

If this project aids your work, please cite it as:

```bibtex
@misc{dang2025reinforcementlearningreasoningsmall,
    title={Reinforcement Learning for Reasoning in Small LLMs: What Works and What Doesn't},
    author={Quy-Anh Dang and Chris Ngo},
    year={2025},
    eprint={2503.16219},
    archivePrefix={arXiv},
    primaryClass={cs.LG},
    url={https://arxiv.org/abs/2503.16219},
}
```


Model tree for quyanh/OpenRS-GRPO

Base model

deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B